Parallel Meshing and Remeshing | Efficient Model Optimization

In the latest release, several enhancements were added to speed up mesh generation in Ansys Workbench by meshing in parallel. For users who build large models, one new feature will be especially helpful in saving time.

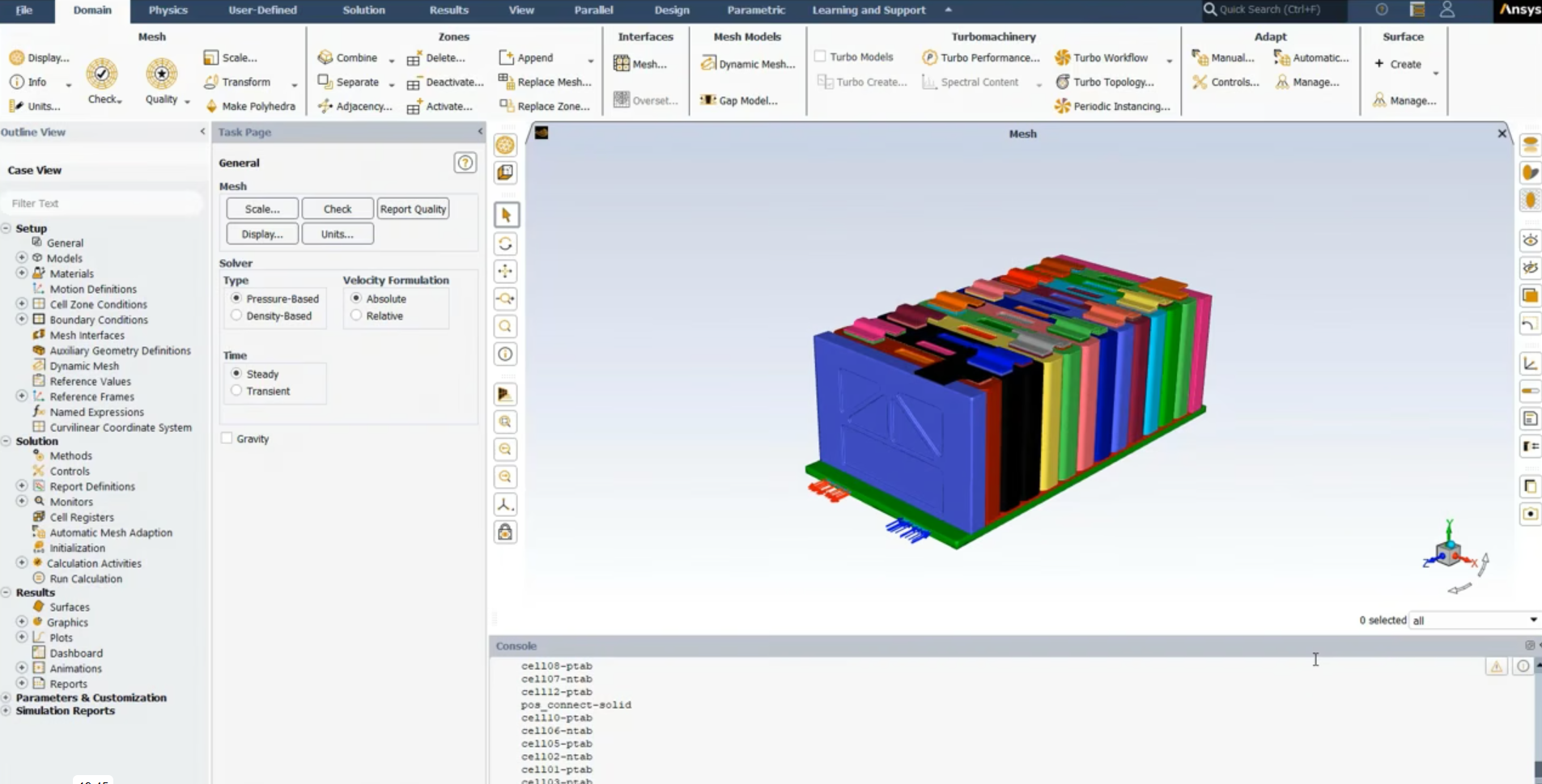

For the first time, Ansys can use multiple CPU cores to generate a mesh WITHOUT ANY ADDITIONAL LICENSES. Ansys will attempt to divide the geometry among the cores so that more of the computer's resources are used during this part of the analysis process. The speed-up is especially dramatic for models with many bodies connected by contact regions.

Breaking up the Model Increases Speed

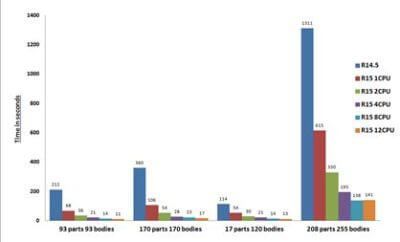

Since the meshes on individual bodies do not affect each other, Ansys can break the model into many pieces and mesh them on separate cores, thus utilizing all cores. If the model is made up of a single multi-body part, the entire part must be sent to one CPU, so the speed increase may not be fully realized. The accompanying image shows a sampling of the speed increases that Ansys observed on several customer models.
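The reason independent parts mesh faster than one multi-body part can be illustrated with a toy scheduling model. This is only a sketch, not the algorithm Ansys uses: the function `estimate_mesh_time`, the greedy longest-first assignment, and the 10-second per-body cost are all assumptions made for illustration.

```python
import heapq


def estimate_mesh_time(part_costs, n_cores):
    """Estimate wall-clock meshing time for independent parts on n_cores.

    Hypothetical model: each part is meshed by exactly one core, and parts
    are handed out longest-first to whichever core is least loaded.
    """
    if n_cores < 1:
        raise ValueError("n_cores must be at least 1")
    loads = [0.0] * n_cores  # accumulated work per core
    heapq.heapify(loads)
    for cost in sorted(part_costs, reverse=True):
        lightest = heapq.heappop(loads)      # least-loaded core so far
        heapq.heappush(loads, lightest + cost)
    return max(loads)                        # the slowest core sets the total time


# Eight separate bodies, each taking an assumed 10 s to mesh.
separate_parts = [10.0] * 8
# The same bodies combined into one multi-body part: a single 80 s job.
one_multibody_part = [80.0]

print(estimate_mesh_time(separate_parts, 4))      # 20.0
print(estimate_mesh_time(one_multibody_part, 4))  # 80.0
```

In this model, four cores cut the time for the separate bodies to a quarter, while the single multi-body part sees no benefit because it cannot be split across cores.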

By default, the program will attempt to use all cores on the machine. One requirement is that each core needs at least 2 GB of RAM available for its use. So, if the computer has 4 cores, it needs a minimum of 8 GB of RAM.
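The memory requirement is simple arithmetic, sketched here for checking a workstation before meshing. The helper names `required_ram_gb` and `usable_mesh_cores` are hypothetical, and the article does not say how Ansys behaves when RAM falls short; the second function only shows how many cores the 2 GB rule would allow.

```python
GB_PER_CORE = 2  # from the text: each core needs at least 2 GB of RAM


def required_ram_gb(cores, gb_per_core=GB_PER_CORE):
    """RAM needed for every one of `cores` to get its minimum share."""
    return cores * gb_per_core


def usable_mesh_cores(total_cores, available_ram_gb, gb_per_core=GB_PER_CORE):
    """Number of cores that satisfy the per-core RAM rule (illustrative only)."""
    if total_cores < 1:
        raise ValueError("total_cores must be at least 1")
    cores_by_memory = int(available_ram_gb // gb_per_core)
    return max(0, min(total_cores, cores_by_memory))


print(required_ram_gb(4))        # 8
print(usable_mesh_cores(8, 8))   # 4
```

An 8-core machine with only 8 GB of RAM therefore meets the requirement for 4 cores, not 8.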

As an added bonus, other mesh methods (MultiZone Quad/Tri, Patch Independent Tetra, and MultiZone) will be able to use multiple cores as well.

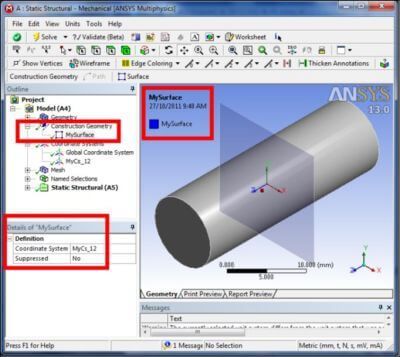

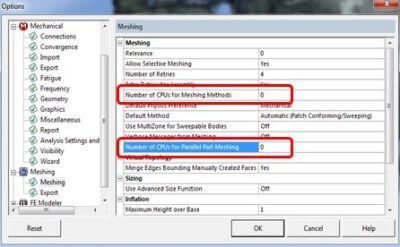

The number of CPUs available to Ansys can be specified in two places under Tools>>Options>>Meshing>>Meshing. The accompanying picture shows the location in the Options menu.

One setting controls the number of cores that the various mesh methods can use, and the other sets the number that can be used for parallel part meshing. By default, both are set to 0, which permits Ansys to use all cores on the computer. If you would like to keep using the computer for other work while the mesh is generated, enter a specific number instead.
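The meaning of the 0 default can be sketched in a few lines. This is not an Ansys API: `resolve_cpu_setting` is a hypothetical helper, and capping a request at the number of cores on the machine is an assumption rather than documented behavior.

```python
import os


def resolve_cpu_setting(setting, machine_cores=None):
    """Translate the options value into a core count.

    0 means "use every core"; a positive value is an explicit limit.
    Capping at machine_cores is an assumption made for this sketch.
    """
    if machine_cores is None:
        machine_cores = os.cpu_count() or 1
    if setting < 0:
        raise ValueError("setting must be 0 or a positive integer")
    if setting == 0:
        return machine_cores
    return min(setting, machine_cores)


print(resolve_cpu_setting(0, machine_cores=8))  # 8
print(resolve_cpu_setting(6, machine_cores=8))  # 6
```

Entering 6 on an 8-core machine, for example, leaves two cores free for other work while the mesh is generated.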

We hope you take advantage of this new advancement. Happy Meshing, Everyone!

Parallel Meshing Products by Ansys

What is Parallel Meshing in Ansys?

Ansys’ parallel meshing technology makes it possible to generate and modify surface and volume meshes in a distributed parallel environment. Through functional interfaces between analysis and meshing, these capabilities let you run large-scale adaptive simulations entirely on a single parallel machine, without costly serialization or disk I/O.

Domain decomposition and load balancing happen internally and automatically during parallel meshing. Just as in serial meshing, the partitioned parallel mesh always remains associated with the real CAD model and adheres to its geometric boundaries.

Additionally, multithreaded meshing is available separately to use all of your computer’s cores and speed up mesh generation and adaptation.

Capabilities for Parallel Meshing in Ansys

- Partitioning and load balancing are automatic during mesh generation and adaptation.

- Complete adherence to the actual CAD geometry.

- Most of the corresponding serial capabilities are supported in parallel.

- Meshes with billions of elements have been created and modified on tens of thousands of processors.

- Any MPI implementation is supported (MPICH, OpenMPI, MS-MPI, your own).

- A rich collection of data structures and API functions for the partitioned mesh lets you run large-scale adaptive simulations without bottlenecks.

- Example: a trap wing in a high-lift configuration, where the initial and modified meshes may run concurrently, including in-plane boundary layer adaptation.

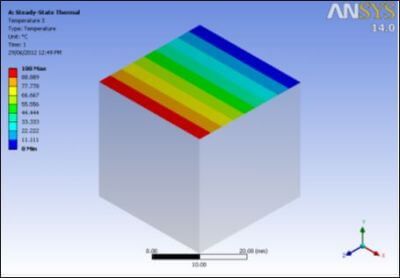

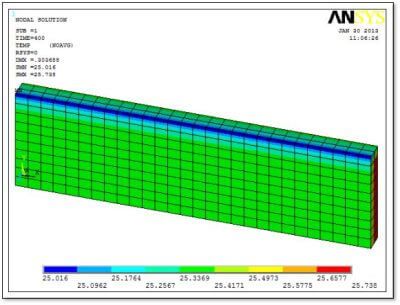

Parametric Mesh Creation

For a specific wing geometry, more than 20 processors can be used simultaneously to create a mesh. Colors identify the mesh pieces assigned to different processors. Engineers can then display a sliced view of the wing’s surface mesh and the 3D boundary layer mesh. Up to 2 million of a mesh's elements can lie within the boundary layer. For a practical analysis, a much finer mesh can then be created, while a coarser mesh (for rapid simulations) may be displayed so that the connectivity can be readily observed.